The relationship between a website’s robots.txt file and its XML sitemap is foundational to technical SEO; the two are meant to work as a harmonious partnership that guides search engine crawlers. However, a direct conflict arises when a folder explicitly disallowed in robots.txt is also meticulously listed in the sitemap: the sitemap asks crawlers to visit those URLs, while robots.txt forbids them from fetching the pages.
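
One way to catch this conflict is to check every `<loc>` URL in the sitemap against the robots.txt rules. The minimal sketch below uses Python’s standard-library `urllib.robotparser` and `xml.etree.ElementTree`; the function name `find_blocked_sitemap_urls` and the default `Googlebot` user agent are illustrative assumptions, not part of any SEO tool. Note that the standard-library parser matches `Disallow` paths by simple prefix and does not implement Google’s `*` and `$` wildcard extensions, so treat the result as a first pass rather than an exact replica of how Googlebot reads the file.

```python
from urllib.robotparser import RobotFileParser
import xml.etree.ElementTree as ET

# Namespace used by standard XML sitemaps (sitemaps.org protocol 0.9).
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def find_blocked_sitemap_urls(
    robots_txt: str, sitemap_xml: str, user_agent: str = "Googlebot"
) -> list[str]:
    """Return sitemap <loc> URLs that robots.txt disallows for `user_agent`.

    Hypothetical helper for illustration: inputs are the raw file contents.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded

    root = ET.fromstring(sitemap_xml)
    urls = [loc.text.strip() for loc in root.iter(f"{SITEMAP_NS}loc") if loc.text]

    # Keep only the URLs the crawler would be forbidden to fetch.
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


robots = "User-agent: *\nDisallow: /private/\n"
sitemap = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://example.com/</loc></url>"
    "<url><loc>https://example.com/private/report.html</loc></url>"
    "</urlset>"
)
print(find_blocked_sitemap_urls(robots, sitemap))
# ['https://example.com/private/report.html']
```

Any URL this returns is a candidate for either removal from the sitemap or a change to the robots.txt rule, depending on whether you actually want it crawled.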

Understanding Link Velocity: The Crucial SEO Metric You Can’t Ignore

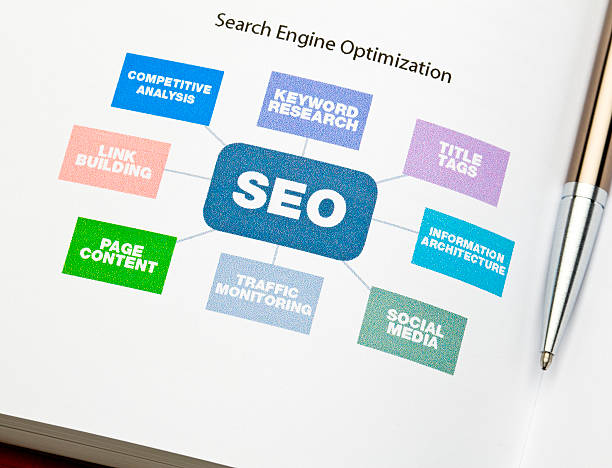

In the intricate and ever-evolving world of search engine optimization, few metrics generate as much curiosity and confusion as link velocity. At its core, link velocity is the rate at which a website acquires new backlinks over a given period, such as a week or a month. Think of it not as a static number but as a measure of momentum: the speed and consistency with which your site’s link profile is growing. The concept moves beyond merely counting links to understanding the story your link growth tells search engines like Google about your site’s popularity and authority. It is the difference between a steady, organic growth curve and a suspicious, jarring spike that could raise red flags.
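
Because link velocity is simply new links per unit of time, it is easy to compute once you know the date each backlink was first discovered (many backlink tools report a "first seen" date, though the exact field varies by tool). The minimal sketch below assumes such a list of dates and buckets it into calendar months; the function name `monthly_link_velocity` and the monthly granularity are hypothetical choices for illustration.

```python
from collections import Counter
from datetime import date


def monthly_link_velocity(first_seen: list[date]) -> dict[str, int]:
    """Count newly acquired backlinks per calendar month ("YYYY-MM" -> count).

    Months between the first and last date with no new links are filled
    with 0, so the series has no gaps.
    """
    if not first_seen:
        return {}
    counts = Counter(d.strftime("%Y-%m") for d in first_seen)
    start, end = min(first_seen), max(first_seen)

    series: dict[str, int] = {}
    year, month = start.year, start.month
    while (year, month) <= (end.year, end.month):
        key = f"{year:04d}-{month:02d}"
        series[key] = counts.get(key, 0)
        # Advance one calendar month, rolling over at December.
        month += 1
        if month > 12:
            year, month = year + 1, 1
    return series


links = [date(2024, 1, 5), date(2024, 1, 20), date(2024, 3, 2)]
print(monthly_link_velocity(links))
# {'2024-01': 2, '2024-02': 0, '2024-03': 1}
```

Zero-filled months keep quiet periods visible, so a long lull followed by a burst shows up in the series instead of being hidden.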

To grasp why link velocity matters, one must first understand the foundational role of backlinks themselves. Search engines view links from other websites as votes of confidence. A link is essentially a referral, suggesting that the content is valuable, authoritative, and worth a user’s time. However, search algorithms are sophisticated; they don’t just tally votes. They analyze the pattern of those votes over time. Natural, organic growth in the digital landscape rarely happens overnight. A genuine piece of groundbreaking research or a viral marketing campaign might attract a rapid influx of links, but for most businesses, a steady, gradual accumulation is the norm. This is where velocity becomes a critical signal. A healthy link velocity typically reflects a consistent content strategy and genuine audience engagement, where new links are earned naturally as your digital footprint expands.

Conversely, an unnatural link velocity is often a glaring sign of manipulation, which is precisely why SEO professionals should monitor it closely. A sudden, massive spike in backlinks, particularly from low-quality or irrelevant sources, is a classic footprint of black-hat tactics such as buying link packages or joining aggressive link schemes. To search engines, this pattern looks artificial: an attempt to game the ranking system. The consequences can be severe, ranging from lost ranking positions to a manual penalty that can devastate a site’s visibility. In this light, link velocity acts as a diagnostic tool, helping webmasters spot potentially harmful SEO practices before they trigger an algorithmic or manual action.
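
A simple way to surface the kind of spike described above is to compare each period’s new-link count against a recent baseline. The sketch below flags a period when its count exceeds a multiple of the median of the preceding periods. This is an illustrative heuristic, not how Google or any SEO platform detects manipulation, and the function name `flag_velocity_spikes` and its `window`, `factor`, and `min_links` thresholds are arbitrary assumptions to tune for your own site. It also ignores link quality, so a flagged period is a prompt to inspect the linking domains, not proof of a link scheme.

```python
from statistics import median


def flag_velocity_spikes(
    series: dict[str, int],
    window: int = 3,
    factor: float = 3.0,
    min_links: int = 10,
) -> list[str]:
    """Return periods whose new-link count looks like a spike.

    `series` maps period labels (e.g. "2024-04") to new-link counts and must
    be in chronological order. A period is flagged when its count is at least
    `min_links` AND exceeds `factor` times the median of the previous `window`
    periods. All thresholds are illustrative assumptions, not known cutoffs.
    """
    periods = list(series)  # dicts preserve insertion order
    flagged: list[str] = []
    for i in range(window, len(periods)):
        # Baseline: median of the preceding `window` periods.
        baseline = median(series[p] for p in periods[i - window:i])
        current = series[periods[i]]
        # min_links ignores small absolute jumps (e.g. 1 -> 4) on low-volume sites.
        if current >= min_links and current > factor * baseline:
            flagged.append(periods[i])
    return flagged


history = {"2024-01": 4, "2024-02": 5, "2024-03": 6, "2024-04": 40, "2024-05": 7}
print(flag_velocity_spikes(history))  # ['2024-04']
```

The median is used rather than the mean so that one earlier spike does not inflate the baseline and mask the next one.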

But the importance of link velocity extends beyond avoiding penalties. A positive and steady link velocity is a powerful indicator of a successful, sustainable SEO strategy. It shows search engines that your site consistently produces link-worthy content, engages with its community, and grows its authority naturally. This sustained momentum reinforces the signals associated with E-A-T (Expertise, Authoritativeness, Trustworthiness; expanded in 2022 to E-E-A-T, adding Experience), the quality framework described in Google’s Search Quality Rater Guidelines. Google has stated that E-E-A-T is not itself a specific ranking factor, but its ranking systems aim to reward content that demonstrates these qualities. A gradual increase suggests that your content marketing, public relations, and digital outreach efforts are bearing fruit, creating a virtuous cycle in which higher rankings bring more visibility, which in turn earns more natural links.

Ultimately, caring about link velocity is about embracing a long-term, quality-focused philosophy for your website’s health. It shifts the focus from a desperate quest for any link to a strategic pursuit of meaningful growth. By monitoring this metric through tools like Google Search Console and third-party SEO platforms, you gain valuable insight into the story your backlink profile tells. You can celebrate a campaign that generates a healthy uptick, investigate (and, where warranted, disavow) suspicious links behind an unnatural spike, and ensure your growth appears organic and deserved. In the grand calculus of search engine rankings, link velocity is your site’s pulse: its rhythm and vitality. Ignoring it means flying blind in a landscape where perception and pattern matter. By understanding and managing your link velocity, you don’t just avoid danger; you actively build a more resilient, authoritative, and trustworthy online presence positioned for lasting success.