In the pursuit of SEO success, accurately gauging the difficulty of ranking for a keyword is paramount. Many practitioners rely on competition data metrics provided by SEO tools, which offer a seemingly objective numerical score.

The Power of Link Intersect Analysis for Strategic SEO

In the intricate and competitive world of search engine optimization, success often hinges on understanding not just your own website’s profile, but the precise strategies that propel your competitors to the top. One of the most potent and insightful techniques for achieving this understanding is link intersect analysis. At its core, link intersect analysis is the process of comparing the backlink profiles of multiple competing websites to identify the specific linking domains that are common to them all, but absent from your own. This method moves beyond simple link quantity metrics to uncover the qualitative, foundational links that an entire industry niche deems valuable, providing a clear and actionable roadmap for the most impactful link-building campaigns.

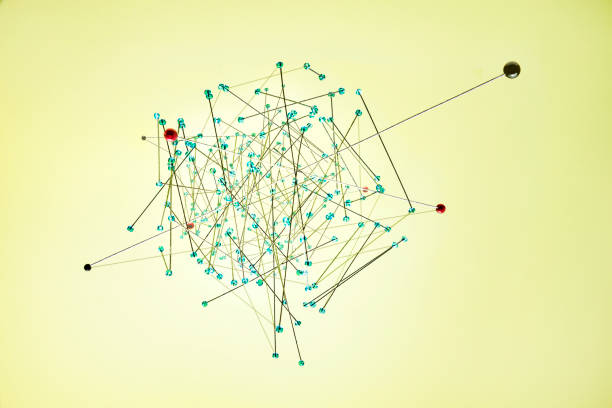

The process begins by selecting a group of three to five top-ranking competitors for a target keyword or topic. Using specialized SEO tools, an analyst exports each competitor's list of referring domains, meaning the unique websites that link to it. These lists are then cross-referenced, much like overlapping circles in a Venn diagram, to isolate the domains that appear in every single competitor’s profile. This set of common domains, after removing any that already link to your own site, is the “link intersect.” These are rarely random or low-quality links; they are the consistent, authoritative endorsements that all leading players in the space have successfully earned. They often include industry-specific directories, respected publications, academic institutions, influential bloggers, and relevant associations that form the bedrock of topical authority. By identifying these shared sources, you effectively reverse-engineer the link acquisition strategy that underpins your competitors’ search visibility.
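The cross-referencing step described above amounts to a set intersection followed by a set difference. The following is a minimal Python sketch under stated assumptions: the referring-domain lists have already been exported from an SEO tool, and the function name `link_intersect`, the domain names, and the lowercase normalization are all hypothetical choices made for illustration, not part of any particular tool's API.

```python
def link_intersect(competitor_domains, own_domains):
    """Return domains that link to every competitor but not to your own site.

    competitor_domains: dict mapping a competitor name to an iterable of its
                        referring domains (hypothetical export format).
    own_domains:        iterable of referring domains for your own site.
    """
    if not competitor_domains:
        return set()

    # Normalize case and whitespace so "Example.com " and "example.com" match.
    profiles = [
        {domain.strip().lower() for domain in domains}
        for domains in competitor_domains.values()
    ]

    # Domains present in every competitor's profile.
    shared = set.intersection(*profiles)

    # Drop domains that already link to your own site.
    own = {domain.strip().lower() for domain in own_domains}
    return shared - own


# Hypothetical referring-domain exports for three competitors.
competitors = {
    "competitor-a.example": ["industry-directory.example", "trade-journal.example", "news.example"],
    "competitor-b.example": ["Industry-Directory.example", "trade-journal.example", "blog.example"],
    "competitor-c.example": ["industry-directory.example", "trade-journal.example", "university.example"],
}
own_site = ["trade-journal.example", "partner.example"]

gaps = link_intersect(competitors, own_site)
print(sorted(gaps))  # ['industry-directory.example']
```

Here `trade-journal.example` is shared by all three competitors but is excluded because it already links to the hypothetical own site, which leaves a single outreach target.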

The true power of link intersect analysis lies in its strategic clarity and efficiency. First, it eliminates guesswork and wasted effort. The digital landscape is vast, and pursuing any and all potential links is a resource-intensive endeavor with diminishing returns. Link intersect analysis provides a prioritized, targeted list of opportunities that are already associated with high rankings for your desired topic, since every top-ranking competitor has them. This allows SEOs and marketers to focus their outreach and content creation resources on the highest-probability targets, improving the return on investment for link-building activities. Instead of casting a wide net, you are spear-fishing in a well-stocked pond.

Furthermore, this analysis reveals the hidden structure of a niche’s link ecosystem. It answers the critical question: “Which authorities does this industry listen to?” Securing a link from a domain that multiple competitors trust sends a strong topical relevance signal to search engines, suggesting that your content is also a credible part of that conversation. This is far more valuable than acquiring a similar number of links from disparate, unrelated sources. By plugging into this existing network of authority, you strengthen the signals associated with E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness), the quality framework Google describes in its rater guidelines, which is crucial for competitive, high-value keywords.
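One way to explore which authorities a niche listens to is to count how many competitors each referring domain links to, rather than requiring a link to every one of them. The sketch below is an illustrative relaxation of the strict intersect, not the method described in this article; the function name `rank_shared_domains`, the `min_competitors` threshold, and the sample domains are all assumptions.

```python
from collections import Counter


def rank_shared_domains(competitor_domains, own_domains, min_competitors=2):
    """Rank referring domains by how many competitors they link to.

    Domains that already link to your own site are excluded. The
    min_competitors threshold is a hypothetical tuning knob.
    """
    own = {domain.strip().lower() for domain in own_domains}

    counts = Counter()
    for domains in competitor_domains.values():
        # A set ensures each domain is counted once per competitor.
        counts.update({domain.strip().lower() for domain in domains})

    ranked = [
        (domain, n)
        for domain, n in counts.items()
        if n >= min_competitors and domain not in own
    ]
    # Most widely shared first; ties broken alphabetically.
    return sorted(ranked, key=lambda item: (-item[1], item[0]))


# Hypothetical referring-domain exports.
profiles = {
    "competitor-a.example": ["directory.example", "journal.example", "news.example"],
    "competitor-b.example": ["directory.example", "journal.example"],
    "competitor-c.example": ["directory.example", "news.example", "forum.example"],
}
own_site = ["journal.example"]

ranking = rank_shared_domains(profiles, own_site)
print(ranking)  # [('directory.example', 3), ('news.example', 2)]
```

Setting `min_competitors` to the number of competitors reproduces the strict intersect; lower values surface domains trusted by most, but not all, of the group.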

Ultimately, link intersect analysis is powerful because it transforms a reactive SEO tactic into a proactive business strategy. It provides a data-driven blueprint for market entry or dominance. For a new website, it outlines the exact foundational links needed to establish credibility. For an established site stuck on page two, it highlights the critical authority gaps holding it back. By systematically pursuing the links that form the common backbone of your competitors’ success, you are not merely copying them; you are intelligently benchmarking against the market standard and building a sustainable, authoritative presence. In an SEO environment where quality decisively trumps quantity, link intersect analysis is the key to identifying and securing the quality links that truly move the needle.