In the intricate dance of search engine optimization, where content and code vie for algorithmic favor, two deceptively simple files serve as foundational blueprints for a website’s relationship with search engines. The XML sitemap and the robots.txt file, often relegated to technical checklists, are in fact profoundly instructive documents.

Essential Tools for a Comprehensive Technical SEO Audit

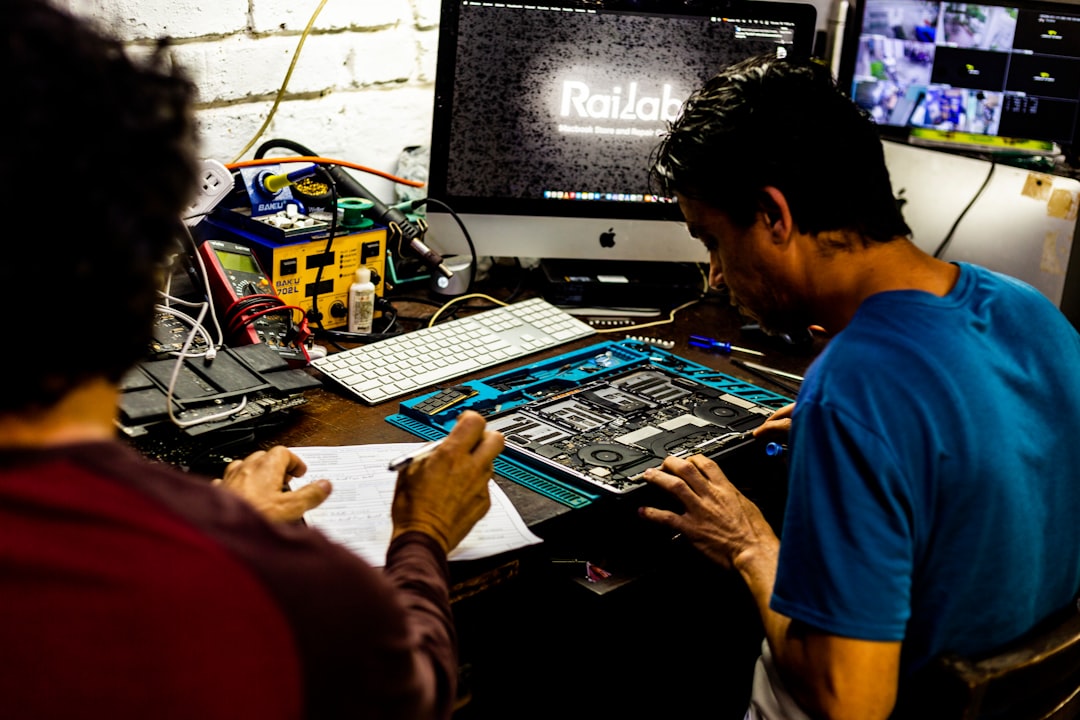

While Google Search Console is an indispensable starting point, providing unique insights directly from the search engine, a truly robust technical SEO audit requires a broader toolkit. Relying on it alone is akin to diagnosing a car’s health by only listening to the engine; you need specialized instruments to examine the chassis, electrical systems, and internal components. To move beyond surface-level findings and uncover the deeper issues affecting crawlability, indexation, and site performance, SEO professionals must integrate several other critical tools into their workflow.

Crawling and site architecture analysis form the bedrock of any technical audit, and for this, dedicated crawlers are non-negotiable. Tools like Screaming Frog SEO Spider or Sitebulb allow for deep, customizable crawls of a website, including large ones, though very large sites can demand substantial memory or storage. These applications excel at uncovering issues that Google Search Console might only hint at, such as long redirect chains, orphaned pages with no internal links (detectable when the crawl is also fed URLs from XML sitemaps or analytics, since a link-following crawl cannot reach them on its own), duplicate content without canonical tags, and broken links across the entire site. They provide a complete map of the site’s structure, revealing how link equity flows and identifying pages buried so deep in the hierarchy, measured in clicks from the homepage, that they are unlikely to be crawled and indexed effectively. This bird’s-eye view is fundamental for diagnosing why certain pages may not be performing as expected.
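To make the click-depth and orphan-page ideas concrete, here is a minimal Python sketch, assuming you already have the crawl’s internal link graph as a dictionary (page to outgoing links) and a separate list of known URLs, such as those from an XML sitemap. The function names, the sample URLs, and the `max_depth=3` cutoff are hypothetical choices for illustration, not settings taken from Screaming Frog or Sitebulb.

```python
from collections import deque


def crawl_depths(link_graph, start):
    """Breadth-first search: each page's depth is its fewest clicks from `start`."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in link_graph.get(page, []):
            if target not in depths:  # first visit in BFS = shortest click path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit_architecture(link_graph, start, known_urls, max_depth=3):
    """Return (pages deeper than max_depth, known URLs unreachable via links)."""
    depths = crawl_depths(link_graph, start)
    too_deep = sorted(url for url, depth in depths.items() if depth > max_depth)
    orphans = sorted(set(known_urls) - set(depths))
    return too_deep, orphans


# Hypothetical internal-link graph and sitemap URL list.
site = {
    "/": ["/blog/", "/products/"],
    "/blog/": ["/blog/page-2/"],
    "/blog/page-2/": ["/blog/page-3/"],
    "/blog/page-3/": ["/blog/page-4/"],
    "/blog/page-4/": ["/blog/old-post/"],
    "/products/": ["/products/widget/"],
}
sitemap = ["/", "/blog/", "/products/widget/", "/blog/old-post/", "/landing/spring-sale/"]

too_deep, orphans = audit_architecture(site, "/", sitemap)
print("Too deep:", too_deep)
print("Orphans:", orphans)
```

Breadth-first search fits here because it visits pages in order of distance, so the first time a page is reached is its shortest click path from the homepage; the orphan list only exists because the sitemap supplies URLs the link graph never reaches.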

Performance and Core Web Vitals assessment has become a cornerstone of technical SEO, and specialized tools are essential for accurate measurement and diagnosis. Search Console reports field data from real Chrome users; PageSpeed Insights shows that same Chrome UX Report (CrUX) field data alongside a Lighthouse lab run, while WebPageTest and Lighthouse itself offer lab-based testing with granular, actionable recommendations. These tools break down metrics such as Largest Contentful Paint, Cumulative Layout Shift, and Interaction to Next Paint (INP depends on real user input, so standard lab runs report Total Blocking Time as a proxy), pinpointing specific render-blocking resources, oversized images, or inefficient JavaScript that hinder user experience. For larger sites, monitoring platforms like the CrUX Dashboard or commercial suites from SEMrush or Ahrefs can track performance trends at scale, confirming that optimizations have a lasting positive impact.
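The sketch below shows how field samples map onto Google’s published Core Web Vitals boundaries (LCP good at or under 2.5 s and poor above 4 s; INP good at or under 200 ms and poor above 500 ms; CLS good at or under 0.1 and poor above 0.25), each assessed at the 75th percentile. It assumes you have your own per-page-load measurements, for example from real-user monitoring; the sample values are invented, and the nearest-rank percentile is a simplification of how CrUX aggregates its data.

```python
import math

# Core Web Vitals boundaries: (upper bound for "good", upper bound for "needs improvement").
THRESHOLDS = {
    "LCP": (2500, 4000),  # milliseconds
    "INP": (200, 500),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless layout-shift score
}


def p75(samples):
    """Nearest-rank 75th percentile of a list of measurements."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric, samples):
    """Classify a metric's 75th-percentile value as good / needs improvement / poor."""
    good, poor = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good:
        return value, "good"
    if value <= poor:
        return value, "needs improvement"
    return value, "poor"


# Hypothetical field samples for a single page.
lcp_ms = [1800, 2100, 2300, 2600, 3900, 2200, 2400, 5200]
inp_ms = [120, 180, 650, 210, 90, 300, 140, 160]
cls_scores = [0.02, 0.05, 0.0, 0.12, 0.08]

for name, samples in [("LCP", lcp_ms), ("INP", inp_ms), ("CLS", cls_scores)]:
    print(name, rate(name, samples))
```

Rating the 75th percentile rather than the average means a handful of very slow loads (like the 5200 ms LCP sample) cannot be hidden by many fast ones, yet a single outlier does not dominate either.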

Backlink analysis, though often considered an off-page activity, is crucial for understanding a site’s technical health from an external perspective. A sudden, unexplained drop in rankings can sometimes be traced to links lost after site migrations or URL changes, or to penalties. Tools like Ahrefs, Majestic, or Moz’s Link Explorer provide a comprehensive view of the backlink profile. They help auditors identify potentially toxic links, find backlinks that point at URLs now returning errors, and ensure that link equity passing through redirects or changed domain structures is being preserved correctly. This external lens complements the internal view provided by crawlers.
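One way to check that backlink equity survives a migration is to follow each linked-to URL through the site’s redirect rules and flag targets that end in an error, loop, or pass through more than one hop. This is a minimal sketch assuming a backlink export reduced to (source, target) pairs, a redirect map, and final status codes from your own crawl; URLs missing from that status map are treated as 404 here. The helper names, sample URLs, and the one-hop limit are hypothetical.

```python
def resolve(url, redirects, statuses, max_hops=10):
    """Follow redirects from url; return (final_url, hops, status_or_problem)."""
    seen = [url]
    while url in redirects:
        url = redirects[url]
        if url in seen:
            return url, len(seen), "redirect loop"
        seen.append(url)
        if len(seen) - 1 > max_hops:
            return url, len(seen) - 1, "too many redirects"
    # Assumption for this sketch: URLs absent from the crawl data are treated as 404.
    return url, len(seen) - 1, statuses.get(url, 404)


def audit_backlinks(backlinks, redirects, statuses, max_chain=1):
    """Map each problematic backlink target to a short description of the issue."""
    issues = {}
    for _source, target in backlinks:
        final, hops, status = resolve(target, redirects, statuses)
        if status != 200:
            issues[target] = f"ends at {final} with {status}"
        elif hops > max_chain:
            issues[target] = f"{hops}-hop chain to {final}"
    return issues


# Hypothetical data: redirect rules, crawled status codes, and a backlink export.
redirects = {
    "http://old.example.com/guide": "https://example.com/guide",
    "https://example.com/guide": "https://example.com/guides/seo",
    "https://example.com/promo": "https://example.com/promo-2023",
}
statuses = {"https://example.com/guides/seo": 200, "https://example.com/pricing": 200}
backlinks = [
    ("https://news.example.org/post", "http://old.example.com/guide"),
    ("https://blog.example.net/review", "https://example.com/pricing"),
    ("https://forum.example.io/thread", "https://example.com/promo"),
]

issues = audit_backlinks(backlinks, redirects, statuses)
for target, problem in issues.items():
    print(target, "->", problem)
```

In this example the old guide URL still resolves, but through two hops that could be collapsed into one direct redirect, while the promo link leads to a dead page and is a candidate for a redirect to a relevant live URL.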

Finally, log file analysis represents perhaps the most advanced and revealing technique, offering a direct line of sight into how search engine bots actually interact with a server. By parsing server logs with tools like Splunk, Screaming Frog Log File Analyzer, or even custom Python scripts, auditors can see exactly which pages Googlebot is crawling, how frequently, and what status codes are returned, provided they first confirm (for example, with a reverse DNS lookup) that requests claiming to be Googlebot really come from Google, since the user-agent string is easily spoofed. This data is unparalleled for identifying crawl budget waste, such as bots repeatedly crawling low-value parameter-based URLs or getting stuck in crawl traps, and for verifying that important new pages or updated content are being discovered promptly. It closes the loop between what you think search engines see and what they actually experience.
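A custom script for this can be short. The sketch below assumes logs in the combined log format used by default in Apache and Nginx, and counts Googlebot requests per URL, per status code, and for parameterized URLs. It identifies Googlebot only by its user-agent string, which anyone can fake; a real audit should confirm the requesting IPs with a reverse DNS lookup (the host should end in googlebot.com or google.com) followed by a forward lookup back to the same IP. The function names and sample log lines, including the documentation-range IP addresses, are hypothetical.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Combined log format: ip ident user [time] "request" status bytes "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_googlebot(lines):
    """Count requests whose user-agent claims to be Googlebot (not DNS-verified)."""
    hits = Counter()      # requests per URL, query string included
    statuses = Counter()  # requests per HTTP status code
    param_hits = 0        # requests for URLs carrying a query string
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue  # skip unparseable lines and non-Googlebot traffic
        path = match["path"]
        hits[path] += 1
        statuses[int(match["status"])] += 1
        if urlsplit(path).query:
            param_hits += 1
    return hits, statuses, param_hits


# Hypothetical log lines for illustration.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"


def log_line(ip, path, status, agent):
    return (f'{ip} - - [10/Mar/2024:10:00:00 +0000] '
            f'"GET {path} HTTP/1.1" {status} 512 "-" "{agent}"')


sample = [
    log_line("192.0.2.10", "/products/widget", 200, GOOGLEBOT_UA),
    log_line("192.0.2.10", "/products?color=red&sort=price", 200, GOOGLEBOT_UA),
    log_line("192.0.2.10", "/old-page", 404, GOOGLEBOT_UA),
    log_line("198.51.100.7", "/products/widget", 200, BROWSER_UA),
]

hits, statuses, param_hits = summarize_googlebot(sample)
print(hits.most_common(3))
print(statuses)    # Counter({200: 2, 404: 1})
print(param_hits)  # 1
```

Run over a full month of logs, a rising share of parameterized hits or of non-200 responses is the kind of crawl budget signal the paragraph above describes.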

In conclusion, a thorough technical SEO audit is a multi-faceted investigation that demands specialized instruments. By combining the direct feedback from Google Search Console with the deep crawling capabilities of desktop tools, the performance diagnostics of speed testing suites, the external intelligence of backlink analyzers, and the raw truth of server log files, SEOs can construct a complete and accurate picture of a website’s technical health. This comprehensive approach enables the identification and resolution of complex issues that would otherwise remain hidden, ultimately building a stronger foundation for organic search success.