Ensuring Accuracy: A Guide to Validating Your Structured Data Markup

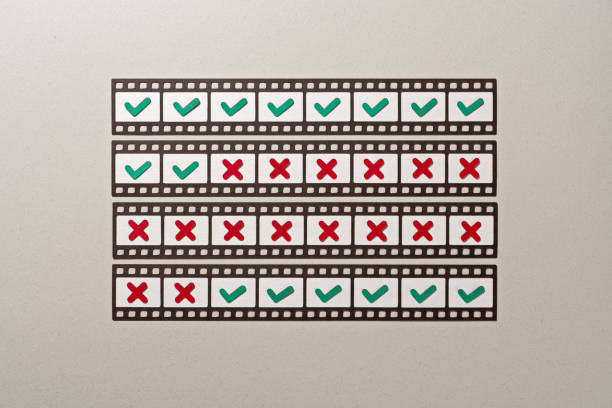

In search engine optimization, structured data markup is a powerful way to make your pages clearer to search engines and more visible in search results. However, the mere presence of this code on your pages does not guarantee success. Like any code, structured data is susceptible to errors in syntax, logic, and implementation. Search engines may ignore invalid or faulty markup, which negates your effort and can cost you rich results such as review stars, recipe cards, or event listings. Validation is therefore not merely a technical step but a critical component of any SEO strategy: it ensures your structured data communicates effectively with search engine crawlers.
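To make the idea of a syntax error concrete, here is a minimal sketch in Python. It assumes the markup is embedded as JSON-LD inside `<script type="application/ld+json">` tags. The names `JsonLdExtractor` and `find_syntax_errors` are hypothetical helpers written for this illustration. It only catches malformed JSON, not vocabulary or logic mistakes.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._buffer = None  # None means we are outside a JSON-LD script

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._buffer = []

    def handle_data(self, data):
        if self._buffer is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._buffer is not None:
            self.blocks.append("".join(self._buffer))
            self._buffer = None


def find_syntax_errors(html):
    """Return one message per JSON-LD block that fails to parse as JSON."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    errors = []
    for index, raw in enumerate(extractor.blocks):
        try:
            json.loads(raw)
        except json.JSONDecodeError as exc:
            errors.append(f"block {index}: {exc.msg} (line {exc.lineno}, column {exc.colno})")
    return errors
```

A stray trailing comma is enough to make a block unparseable, and this kind of check reports it along with the block's position on the page.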

The cornerstone of structured data validation is the set of free tools provided by Google itself. The most prominent of these is the Rich Results Test. It is easy to use and directly relevant, because it evaluates your markup specifically for eligibility to trigger Google’s rich results. You can paste either the URL of a live page or a block of code into the tool. It then parses the structured data and reports critical errors that would prevent a rich result, along with warnings for recommended improvements. For supported result types, the tool also previews how your page might appear in search, offering immediate and practical feedback. For a more general analysis of all structured data on a page, irrespective of rich result eligibility, Google used to offer the Structured Data Testing Tool; that tool has since been retired, and its generic validation role has moved to a validator hosted by Schema.org.

Beyond Google’s offerings, the Schema Markup Validator from Schema.org, the community project that maintains the vocabulary for structured data, is an essential resource. This validator is agnostic to any specific search engine’s interpretation and focuses purely on the correctness of the Schema.org vocabulary and syntax. It is particularly useful for checking that your markup adheres to the official type and property definitions, which gives you a baseline of technical correctness. For developers who prefer to stay within their own development environment, command-line tools and browser extensions offer continuous validation. Extensions can give feedback on a page as you work on it, while command-line tools can be integrated into build processes to check markup automatically before deployment, catching errors early in the development cycle.
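As a rough illustration of a build-time check, the following Python sketch reads JSON-LD files and reports missing properties. Everything in it is an assumption made for the example: the `REQUIRED_PROPERTIES` map is a hand-maintained project checklist, not an official list from Google or Schema.org, and `check_item` and `main` are hypothetical names. It also assumes each file holds a single object or a flat list of objects, and it does not unpack `@graph` containers.

```python
import json
import sys

# Illustrative assumption: properties this project wants on each type.
# This is NOT an official list of required properties.
REQUIRED_PROPERTIES = {
    "Recipe": ["name", "image"],
    "Event": ["name", "startDate", "location"],
}


def check_item(item):
    """Return a list of problems found in one parsed JSON-LD object."""
    problems = []
    if "@context" not in item:
        problems.append("missing @context")
    declared = item.get("@type")
    if declared is None:
        problems.append("missing @type")
        return problems
    # @type may be a single string or a list of strings.
    type_names = declared if isinstance(declared, list) else [declared]
    for type_name in type_names:
        for prop in REQUIRED_PROPERTIES.get(type_name, []):
            if prop not in item:
                problems.append(f"{type_name} is missing '{prop}'")
    return problems


def main(paths):
    """Check every file in paths; return 1 if any problem was found, else 0."""
    failed = False
    for path in paths:
        with open(path, encoding="utf-8") as handle:
            data = json.load(handle)
        items = data if isinstance(data, list) else [data]
        for item in items:
            for problem in check_item(item):
                print(f"{path}: {problem}", file=sys.stderr)
                failed = True
    return 1 if failed else 0
```

Ending the script with `sys.exit(main(sys.argv[1:]))` would let a build step fail on the non-zero exit code, so faulty markup stops the deployment instead of reaching production.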

Validation, however, should not be viewed as a one-time event. It is an ongoing process that must be integrated into your website’s lifecycle. After the initial implementation, any subsequent change to your website’s templates, content management system, or plugins can inadvertently introduce errors or remove existing markup. A routine audit schedule is highly advisable. Furthermore, after validating your markup, it is prudent to monitor its performance within Google Search Console. The rich result status reports, grouped under “Enhancements” in the Search Console sidebar, show for each detected markup type how many items are valid, and they flag new errors that Google’s crawlers detect over time. This provides a vital feedback loop between your validation efforts and real-world search engine interaction.
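One simple way to catch markup that silently disappears after a template or plugin change is to compare the types found on a page before and after the change. The Python sketch below assumes you already have the parsed JSON-LD objects for both versions of the page. The function names `type_counts` and `markup_regressions` are hypothetical, chosen for this example.

```python
from collections import Counter


def type_counts(items):
    """Count how often each @type appears in a list of parsed JSON-LD objects."""
    counts = Counter()
    for item in items:
        declared = item.get("@type", "(untyped)")
        # @type may be a single string or a list of strings.
        for name in declared if isinstance(declared, list) else [declared]:
            counts[name] += 1
    return counts


def markup_regressions(before, after):
    """Return {type: (old_count, new_count)} for every type that became less frequent."""
    old, new = type_counts(before), type_counts(after)
    return {name: (old[name], new[name]) for name in old if new[name] < old[name]}
```

An empty result means no type became less frequent. It says nothing about whether the remaining markup is still valid, so a check like this complements the validators rather than replacing them.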

Ultimately, the validation of structured data is a multifaceted practice that blends automated tools with strategic oversight. It begins with the technical verification of syntax and vocabulary using dedicated validators, ensuring the language you are using is correct. It then progresses to platform-specific testing with tools like the Rich Results Test to confirm the intended visual outcomes. Finally, it is sustained through ongoing monitoring in operational dashboards like Search Console. By embracing this comprehensive approach, you transform your structured data from a static piece of code into a dynamic and reliable channel of communication with search engines. This diligence not only safeguards your investment in SEO but also maximizes the likelihood that your content will be presented in the most informative and engaging way possible to users across the web.